iOS — How to Integrate Camera APIs using SwiftUI

Introduction

Today, we’ll show you how to connect SwiftUI to the camera APIs and simplify the process of building a camera app. We’ve kept the implementation approachable so that developers, whether new to the camera APIs or experienced with them, can follow this guide easily.

This blog serves as a comprehensive guide. It starts with attaching a camera preview to your UI, then covers essential features like flash support, focus control, zoom, and switching cameras, and finishes with the key process of capturing images and saving them to your device.

This guide may appear lengthy, but I’m sure you’ll find it a valuable resource that simplifies the process of crafting camera-enabled applications, especially with SwiftUI.


We will create a SwiftUI app to understand the basic camera APIs.

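Here is the basic layout of the main camera screen that we are going to build throughout this blog: a flash toggle at the top, the live camera preview in the middle, and a photo thumbnail, capture button, and camera-switch button along the bottom.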

I’ve taken the UI inspiration from this blog and tried to optimize the Camera API implementation to make it a more user-friendly and simplified experience with SwiftUI.

The source code is available on GitHub.

Camera Preview

First, add a new Swift file named CameraPreview.swift and add a struct for the camera preview view to it.

Here is the code for the preview UI.

import SwiftUI
import AVFoundation // To access the camera-related classes and methods

struct CameraPreview: UIViewRepresentable { // for attaching AVCaptureVideoPreviewLayer to a SwiftUI view
    let session: AVCaptureSession

    // Creates and configures a UIKit-based video preview view
    func makeUIView(context: Context) -> VideoPreviewView {
        let view = VideoPreviewView()
        view.backgroundColor = .black
        view.videoPreviewLayer.session = session
        view.videoPreviewLayer.videoGravity = .resizeAspect
        view.videoPreviewLayer.connection?.videoOrientation = .portrait
        return view
    }

    // Updates the video preview view (nothing to update here)
    public func updateUIView(_ uiView: VideoPreviewView, context: Context) { }

    // UIKit-based view for displaying the camera preview
    class VideoPreviewView: UIView {
        // Makes AVCaptureVideoPreviewLayer the view's backing layer
        override class var layerClass: AnyClass {
            AVCaptureVideoPreviewLayer.self
        }

        // Retrieves the AVCaptureVideoPreviewLayer for configuration
        var videoPreviewLayer: AVCaptureVideoPreviewLayer {
            return layer as! AVCaptureVideoPreviewLayer
        }
    }
}

UIViewRepresentable acts as a bridge that lets SwiftUI display a UIKit UIView.

AVCaptureSession is an object that manages real-time capture of audio and video. It represents the session used to capture the live camera feed.

The remaining code sets up a UIKit-based view in SwiftUI, specifically a video preview view with customized settings.

Add Camera Manager

The manager is the main component: it connects to the iPhone’s camera, runs the capture session, and, later in this guide, handles flash, focus, zoom, camera switching, and photo capture.

I’ve tried to follow common coding standards, using the MVVM architecture and SwiftUI state management.

Let’s start by creating a new file named CameraManager.swift and replacing the default code with the following:

import AVFoundation
import Combine
import UIKit

// This class conforms to ObservableObject so its @Published properties can be observed with Combine
class CameraManager: ObservableObject {

    // Represents the camera's status
    enum Status {
        case configured
        case unconfigured
        case unauthorized
        case failed
    }

    // Observes changes in the camera's status
    @Published var status = Status.unconfigured

    // Alert state that the view model forwards to the UI
    // (AlertError is the project's alert model; its definition isn't shown in this post)
    @Published var shouldShowAlertView = false
    var alertError: AlertError?

    // AVCaptureSession manages the camera settings and data flow between capture inputs and outputs.
    // It can connect one or more inputs to one or more outputs
    let session = AVCaptureSession()

    // AVCapturePhotoOutput for capturing photos
    let photoOutput = AVCapturePhotoOutput()

    // AVCaptureDeviceInput for handling video input from the camera
    // Basically provides a bridge from the device to the AVCaptureSession
    var videoDeviceInput: AVCaptureDeviceInput?

    // Current camera position; setupVideoInput() reads it (also used when switching cameras)
    var position: AVCaptureDevice.Position = .back

    // Serial queue to ensure thread safety when working with the camera
    private let sessionQueue = DispatchQueue(label: "com.demo.sessionQueue")

    // Method to configure the camera capture session
    func configureCaptureSession() {
        sessionQueue.async { [weak self] in
            guard let self, self.status == .unconfigured else { return }

            // Begin session configuration
            self.session.beginConfiguration()

            // Set session preset for high-quality photo capture
            self.session.sessionPreset = .photo

            // Add video input from the device's camera, then the photo output
            // (the output is skipped if the input couldn't be added)
            if self.setupVideoInput() {
                self.setupPhotoOutput()
            }

            // Commit session configuration exactly once
            self.session.commitConfiguration()

            // Start capturing if everything is configured correctly
            self.startCapturing()
        }
    }

    // Method to set up video input from the camera; returns true on success
    @discardableResult
    private func setupVideoInput() -> Bool {
        do {
            // Get the wide-angle camera at the current position for video capture
            // AVCaptureDevice is a representation of the hardware device to use
            guard let camera = AVCaptureDevice.default(.builtInWideAngleCamera, for: .video, position: position) else {
                print("CameraManager: Video device is unavailable.")
                status = .unconfigured
                return false
            }

            // Create an AVCaptureDeviceInput from the camera
            let videoInput = try AVCaptureDeviceInput(device: camera)

            // Add video input to the session if possible
            guard session.canAddInput(videoInput) else {
                print("CameraManager: Couldn't add video device input to the session.")
                status = .unconfigured
                return false
            }
            session.addInput(videoInput)
            videoDeviceInput = videoInput
            status = .configured
            return true
        } catch {
            print("CameraManager: Couldn't create video device input: \(error)")
            status = .failed
            return false
        }
    }

    // Method to configure the photo output settings
    private func setupPhotoOutput() {
        guard session.canAddOutput(photoOutput) else {
            print("CameraManager: Could not add photo output to the session")
            // Set an error status; configureCaptureSession() commits the configuration
            status = .failed
            return
        }

        // Add the photo output to the session
        session.addOutput(photoOutput)

        // Configure photo output settings
        photoOutput.isHighResolutionCaptureEnabled = true // deprecated in iOS 16; see maxPhotoDimensions below
        photoOutput.maxPhotoQualityPrioritization = .quality
        // photoOutput.maxPhotoDimensions = .init(width: 4032, height: 3024) // iOS 16.0+ replacement

        // Update the status to indicate successful configuration
        status = .configured
    }

    // Method to start capturing (runs on sessionQueue)
    private func startCapturing() {
        if status == .configured {
            // Start running the capture session; this call blocks, so keep it off the main thread
            session.startRunning()
        } else {
            DispatchQueue.main.async {
                // Surface configuration and permission errors to the UI
                self.alertError = AlertError(title: "Camera Error", message: "Camera configuration failed. Either your device camera is not available or it's missing permissions", primaryButtonTitle: "OK", secondaryButtonTitle: nil, primaryAction: nil, secondaryAction: nil)
                self.shouldShowAlertView = true
            }
        }
    }

    // Method to stop capturing
    func stopCapturing() {
        // Ensure thread safety using `sessionQueue`.
        sessionQueue.async { [weak self] in
            guard let self else { return }

            // Check if the capture session is currently running.
            if self.session.isRunning {
                // Stops the capture session and any associated device inputs.
                self.session.stopRunning()
            }
        }
    }
}

It may seem like there’s a lot to digest here 😅 but don’t worry: as you go through the code and its comments, you will gain a clear understanding of each component step by step.
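CameraManager (and later the view model and ContentView) rely on an AlertError type whose definition isn't shown in this post. If you are following along without the GitHub project, here is a minimal sketch that matches the initializer call in startCapturing() and the properties the alert in ContentView reads. Treat its exact shape as an assumption; the project's own type may differ.

```swift
// A minimal sketch of the AlertError model assumed by CameraManager and ContentView.
// Property names mirror the initializer call in startCapturing(); the real type may differ.
struct AlertError {
    var title: String = ""
    var message: String = ""
    var primaryButtonTitle: String = "OK"
    var secondaryButtonTitle: String? = nil
    var primaryAction: (() -> Void)? = nil
    var secondaryAction: (() -> Void)? = nil
}

// Usage: the same call CameraManager makes when configuration fails.
let cameraAlert = AlertError(title: "Camera Error",
                             message: "Camera configuration failed.",
                             primaryButtonTitle: "OK",
                             secondaryButtonTitle: nil,
                             primaryAction: nil,
                             secondaryAction: nil)
```

Because every property has a default value, Swift's memberwise initializer accepts the full argument list used in the manager, and AlertError() also works as an empty placeholder.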

Add a View Model

To keep our code organized and clear, it’s advisable to create a separate view model. This view model will handle the core logic and provide the necessary data to our view for display.

We’ll eventually add some fairly intensive business logic around what will be displayed on the screen of the ContentView to this view model class.

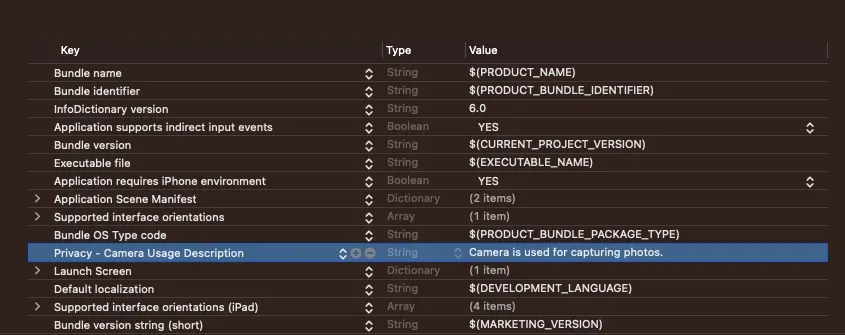

Requesting camera access permission

Privacy is one of Apple’s most touted pillars. Since Apple cares about users’ privacy, it only makes sense that the user needs to grant an app permission to use the camera.

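So make sure to add a camera usage description to the project’s Info.plist file under the NSCameraUsageDescription key (shown in Xcode as “Privacy - Camera Usage Description”). Without it, the app crashes when it tries to access the camera.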

Let’s create a new Swift file named CameraViewModel.swift. Then, replace the contents of that file with the following code:

import AVFoundation
import Combine

class CameraViewModel: ObservableObject {

    // Reference to the CameraManager. A plain property is enough here;
    // @ObservedObject is meant for SwiftUI views, not for other observable objects.
    let cameraManager = CameraManager()

    // Published properties to trigger UI updates.
    @Published var isFlashOn = false
    @Published var showAlertError = false
    @Published var showSettingAlert = false
    @Published var isPermissionGranted: Bool = false

    var alertError: AlertError!

    // The capture session owned by the CameraManager, used by CameraPreview.
    var session: AVCaptureSession { cameraManager.session }

    // Cancellable storage for Combine subscribers.
    private var cancelables = Set<AnyCancellable>()

    deinit {
        // Stop capturing when the view model is deallocated.
        cameraManager.stopCapturing()
    }

    // Set up Combine bindings to handle values emitted by the manager's publishers
    func setupBindings() {
        cameraManager.$shouldShowAlertView
            .receive(on: DispatchQueue.main)
            .sink { [weak self] value in
                // Update alertError and showAlertError based on cameraManager's state.
                self?.alertError = self?.cameraManager.alertError
                self?.showAlertError = value
            }
            .store(in: &cancelables)
    }

    // Check for camera device permission.
    func checkForDevicePermission() {
        switch AVCaptureDevice.authorizationStatus(for: .video) {
        case .authorized:
            // If permission is granted, configure the camera.
            isPermissionGranted = true
            configureCamera()
        case .notDetermined:
            // The user hasn't been asked yet: request access, then configure the camera if granted.
            // The completion handler runs on an arbitrary queue, so hop to the main queue.
            AVCaptureDevice.requestAccess(for: .video) { [weak self] granted in
                DispatchQueue.main.async {
                    self?.isPermissionGranted = granted
                    if granted {
                        self?.configureCamera()
                    }
                }
            }
        case .denied, .restricted:
            // If permission is denied or restricted, show a settings alert.
            isPermissionGranted = false
            showSettingAlert = true
        @unknown default:
            break
        }
    }

    // Configure the camera through the CameraManager to show a live camera preview.
    func configureCamera() {
        cameraManager.configureCaptureSession()
    }
}

This view model manages all the camera-related operations for the SwiftUI views. For now, it:

- Handles camera permissions, errors, and session configuration.

- Uses Combine bindings to update the UI based on the manager’s state changes.

- Ensures proper camera management and access.
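The permission handling in checkForDevicePermission() is essentially a small decision table. The sketch below pulls it out into plain Swift so the logic can be read, and unit-tested, without a camera. CameraAccessState and CameraPermissionAction are hypothetical types; CameraAccessState simply mirrors the four cases of AVAuthorizationStatus.

```swift
// Hypothetical mirror of AVAuthorizationStatus so the logic runs without AVFoundation.
enum CameraAccessState {
    case notDetermined, restricted, denied, authorized
}

// Hypothetical actions the view model can take for each state.
enum CameraPermissionAction: Equatable {
    case configureCamera    // access granted: set up the capture session
    case requestAccess      // never asked: show the system prompt
    case showSettingsAlert  // denied or restricted: point the user to Settings
}

// Maps the current authorization state to the next step in the flow.
func nextAction(for state: CameraAccessState) -> CameraPermissionAction {
    switch state {
    case .authorized:
        return .configureCamera
    case .notDetermined:
        return .requestAccess
    case .denied, .restricted:
        return .showSettingsAlert
    }
}
```

With the real AVAuthorizationStatus, keep an @unknown default branch as well, since future SDKs may add cases.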

Now, let’s connect this data to the UI.

Design Camera Screen

We are going to design the screen to resemble the default Camera app. To do that, update the code in the ContentView.swift file:

import SwiftUI

struct ContentView: View {
    // @StateObject keeps one view model alive for the lifetime of this view
    @StateObject var viewModel = CameraViewModel()

    var body: some View {
        GeometryReader { geometry in
            ZStack {
                Color.black.edgesIgnoringSafeArea(.all)

                VStack(spacing: 0) {
                    Button(action: {
                        // Call the method to turn the flashlight on/off
                    }, label: {
                        Image(systemName: viewModel.isFlashOn ? "bolt.fill" : "bolt.slash.fill")
                            .font(.system(size: 20, weight: .medium, design: .default))
                    })
                    .accentColor(viewModel.isFlashOn ? .yellow : .white)

                    CameraPreview(session: viewModel.session)

                    HStack {
                        PhotoThumbnail()
                        Spacer()
                        CaptureButton { /* Call the capture method */ }
                        Spacer()
                        CameraSwitchButton { /* Call the camera switch method */ }
                    }
                    .padding(20)
                }
            }
            .alert(isPresented: $viewModel.showAlertError) {
                Alert(title: Text(viewModel.alertError.title),
                      message: Text(viewModel.alertError.message),
                      dismissButton: .default(Text(viewModel.alertError.primaryButtonTitle), action: {
                          viewModel.alertError.primaryAction?()
                      }))
            }
            .alert(isPresented: $viewModel.showSettingAlert) {
                Alert(title: Text("Warning"),
                      message: Text("Application doesn't have all permissions to use camera and microphone, please change privacy settings."),
                      dismissButton: .default(Text("Go to settings"), action: {
                          self.openSettings()
                      }))
            }
            .onAppear {
                viewModel.setupBindings()
                viewModel.checkForDevicePermission()
            }
        }
    }

    // Opens the app's page in the Settings app
    func openSettings() {
        if let url = URL(string: UIApplication.openSettingsURLString) {
            UIApplication.shared.open(url, options: [:])
        }
    }
}

Let’s add the implementation of the other views used in the HStack above.

struct PhotoThumbnail: View {
    // The latest captured photo; stays nil until photo capture is added later in this guide
    var image: UIImage? = nil

    var body: some View {
        Group {
            if let image {
                // If we have an image, show it
                Image(uiImage: image)
                    .resizable()
                    .aspectRatio(contentMode: .fill)
                    .frame(width: 60, height: 60)
                    .clipShape(RoundedRectangle(cornerRadius: 10, style: .continuous))
            } else {
                // Otherwise, just show a black placeholder
                Rectangle()
                    .frame(width: 50, height: 50, alignment: .center)
                    .foregroundColor(.black)
            }
        }
    }
}

struct CaptureButton: View {
    var action: () -> Void

    var body: some View {
        Button(action: action) {
            Circle()
                .foregroundColor(.white)
                .frame(width: 70, height: 70, alignment: .center)
                .overlay(
                    Circle()
                        .stroke(Color.black.opacity(0.8), lineWidth: 2)
                        .frame(width: 59, height: 59, alignment: .center)
                )
        }
    }
}

struct CameraSwitchButton: View {
    var action: () -> Void

    var body: some View {
        Button(action: action) {
            Circle()
                .foregroundColor(Color.gray.opacity(0.2))
                .frame(width: 45, height: 45, alignment: .center)
                .overlay(
                    Image(systemName: "camera.rotate.fill")
                        .foregroundColor(.white))
        }
    }
}

With these, we are done with the core camera screen, and you can test the app now.

We’ll now tackle the remaining features step by step.

Manage the Flashlight

Now, we are going to enable the flashlight functionality. We’ll start by adding a property to the CameraManager class to track the flashlight's status (on or off).

@Published private var flashMode: AVCaptureDevice.FlashMode = .off

To toggle the flashlight, we’ll implement a method in the same class.

func toggleTorch(tourchIsOn: Bool) {
    // Access the default video capture device.
    guard let device = AVCaptureDevice.default(for: .video) else { return }

    // Check if the device has a torch (flashlight).
    guard device.hasTorch else {
        print("Torch not available for this device.")
        return
    }

    do {
        // Lock the device configuration for changes; unlock on every exit path.
        try device.lockForConfiguration()
        defer { device.unlockForConfiguration() }

        // Set the flash mode used for photo capture based on the tourchIsOn parameter.
        flashMode = tourchIsOn ? .on : .off

        if tourchIsOn {
            // Turn the torch on at full intensity.
            try device.setTorchModeOn(level: 1.0)
        } else {
            // Turn the torch off.
            device.torchMode = .off
        }
    } catch {
        // Handle any errors during configuration changes.
        print("Failed to set torch mode: \(error).")
    }
}

This method toggles the flashlight of the device’s camera based on the tourchIsOn parameter.

Now, update the CameraViewModel to call this method for flashlight control.

func switchFlash() {
    isFlashOn.toggle()
    cameraManager.toggleTorch(tourchIsOn: isFlashOn)
}

Let’s link this method to a button in our view:

Button(action: {
    viewModel.switchFlash()
}, label: {
    Image(systemName: viewModel.isFlashOn ? "bolt.fill" : "bolt.slash.fill")
        .font(.system(size: 20, weight: .medium, design: .default))
})
.accentColor(viewModel.isFlashOn ? .yellow : .white)

Run the project to see it in action!

Focus on Tap

This feature allows users to tap on the camera preview to specify the focus and exposure point.

We’ll start by updating the CameraManager class with a function called setFocusOnTap which configures the camera to focus and expose at the point where the user tapped.

func setFocusOnTap(devicePoint: CGPoint) {
    // devicePoint must be in the capture device's normalized coordinate space:
    // (0, 0) is the top-left and (1, 1) the bottom-right of the picture,
    // with the device in landscape orientation and the home button on the right.
    guard let cameraDevice = self.videoDeviceInput?.device else { return }

    do {
        try cameraDevice.lockForConfiguration()
        defer { cameraDevice.unlockForConfiguration() }

        // Set the point of interest first; setting focusMode afterwards starts the focus operation.
        if cameraDevice.isFocusPointOfInterestSupported, cameraDevice.isFocusModeSupported(.autoFocus) {
            cameraDevice.focusPointOfInterest = devicePoint
            cameraDevice.focusMode = .autoFocus
        }

        // Same order for exposure: point first, then mode.
        if cameraDevice.isExposurePointOfInterestSupported, cameraDevice.isExposureModeSupported(.autoExpose) {
            cameraDevice.exposurePointOfInterest = devicePoint
            cameraDevice.exposureMode = .autoExpose
        }

        // Enable monitoring for changes in the subject area.
        cameraDevice.isSubjectAreaChangeMonitoringEnabled = true
    } catch {
        print("Failed to configure focus: \(error)")
    }
}

Let’s update the CameraViewModel class with a function to set the focus point based on user taps.

func setFocus(point: CGPoint) {
    // Delegate focus configuration to the CameraManager.
    cameraManager.setFocusOnTap(devicePoint: point)
}

In the CameraPreview struct, we need to add a tap gesture recognizer so that users can tap the camera preview to specify the focus point. Start with a Coordinator class, nested inside CameraPreview, that receives the taps:

class Coordinator: NSObject {
    var parent: CameraPreview

    init(_ parent: CameraPreview) {
        self.parent = parent
    }

    // Forwards the tap location (in the preview view's coordinates) to SwiftUI
    @objc func handleTapGesture(_ sender: UITapGestureRecognizer) {
        let location = sender.location(in: sender.view)
        parent.onTap(location)
    }
}

Let’s implement the makeCoordinator function to create a coordinator instance:

func makeCoordinator() -> Coordinator {
    Coordinator(self)
}

Next, add an onTap closure property and modify the makeUIView function to attach a tap gesture recognizer:

var onTap: (CGPoint) -> Void // handles the user's tap actions

func makeUIView(context: Context) -> VideoPreviewView {
    let view = VideoPreviewView()
    view.backgroundColor = .black
    view.videoPreviewLayer.session = session
    view.videoPreviewLayer.videoGravity = .resizeAspect
    view.videoPreviewLayer.connection?.videoOrientation = .portrait

    // Add a tap gesture recognizer that forwards taps to the coordinator
    let tapGesture = UITapGestureRecognizer(target: context.coordinator, action: #selector(Coordinator.handleTapGesture(_:)))
    view.addGestureRecognizer(tapGesture)

    return view
}

Now let’s update the view layer to make use of the tap gesture support.

In ContentView, add two state properties and update the CameraPreview call to handle taps:

@State private var isFocused = false
@State private var focusLocation: CGPoint = .zero

CameraPreview(session: viewModel.session) { tapPoint in
    isFocused = true
    focusLocation = tapPoint
    viewModel.setFocus(point: tapPoint)

    // Provide haptic feedback to enhance the user experience
    UIImpactFeedbackGenerator(style: .medium).impactOccurred()
}

Provide visual feedback for focus preview

We introduce visual feedback for focus adjustments when users tap on the camera preview. This enhancement provides a clear and interactive way to specify the desired focus point.

@State private var isScaled = false // To scale the view

// Note: Add this view right after the CameraPreview view,
// wrapping both in a ZStack so the focus view is drawn above the preview

// Show the FocusView while a focus adjustment is in progress
if isFocused {
    FocusView(position: $focusLocation)
        .scaleEffect(isScaled ? 0.8 : 1)
        .onAppear {
            // Add a springy animation effect for visual appeal.
            withAnimation(.spring(response: 0.4, dampingFraction: 0.6, blendDuration: 0)) {
                self.isScaled = true
            }

            // Return to the default state after 0.6 seconds.
            DispatchQueue.main.asyncAfter(deadline: .now() + 0.6) {
                self.isFocused = false
                self.isScaled = false
            }
        }
}

Here’s the FocusView implementation:

struct FocusView: View {
    @Binding var position: CGPoint

    var body: some View {
        Circle()
            .frame(width: 70, height: 70)
            .foregroundColor(.clear)
            .border(Color.yellow, width: 1.5) // border draws a square around the frame, like a focus box
            .position(x: position.x, y: position.y) // To show the view at the tapped location
    }
}

Now you’re ready to run your app.
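setFocusOnTap(devicePoint:) expects a point in the camera's normalized coordinate space, while the gesture recognizer reports the tap in view points. On iOS, AVCaptureVideoPreviewLayer's captureDevicePointConverted(fromLayerPoint:) performs this conversion, so one option is to call it on the preview layer (for example in the Coordinator, where sender.view is the VideoPreviewView) before the point reaches the manager. To show what that conversion involves, here is a plain-Swift sketch under stated assumptions: a portrait UI, the back camera (no mirroring), and the .resizeAspect gravity used in CameraPreview. The function devicePoint(forTapX:tapY:viewWidth:viewHeight:videoAspect:) is hypothetical.

```swift
// Hypothetical helper: converts a tap in a portrait preview view into the normalized
// coordinate space used by focusPointOfInterest and exposurePointOfInterest.
// Assumptions: portrait UI, back camera (no mirroring), .resizeAspect video gravity.
// videoAspect is the displayed video's width / height in portrait (e.g. 3.0 / 4.0).
func devicePoint(forTapX tapX: Double, tapY: Double,
                 viewWidth: Double, viewHeight: Double,
                 videoAspect: Double) -> (x: Double, y: Double)? {
    // .resizeAspect fits the whole video inside the view, leaving bars on two sides.
    var contentWidth = viewWidth
    var contentHeight = viewWidth / videoAspect
    if contentHeight > viewHeight {
        contentHeight = viewHeight
        contentWidth = viewHeight * videoAspect
    }
    let originX = (viewWidth - contentWidth) / 2
    let originY = (viewHeight - contentHeight) / 2

    // Normalize the tap within the visible video rectangle (0...1 on both axes).
    let u = (tapX - originX) / contentWidth
    let v = (tapY - originY) / contentHeight

    // Taps on the black bars don't correspond to any part of the image.
    guard (0.0...1.0).contains(u), (0.0...1.0).contains(v) else { return nil }

    // The sensor's space is landscape: swap the axes and flip the horizontal one.
    return (x: v, y: 1 - u)
}
```

In practice, captureDevicePointConverted(fromLayerPoint:) is simpler and also handles mirroring and other gravities; the sketch mainly shows why a raw view coordinate such as (150, 300) can't be passed to focusPointOfInterest directly.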

Zoom in/out with a pinch

To enable pinch-to-zoom in our app, we need to make a few updates.

Let’s start by making the necessary updates in the CameraManager class with a function called setZoomScale.

func setZoomScale(factor: CGFloat) {
    // Ensure we have a valid video device input.
    guard let device = self.videoDeviceInput?.device else { return }

    do {
        try device.lockForConfiguration()
        defer { device.unlockForConfiguration() }

        // Clamp the requested factor to the range this device supports
        device.videoZoomFactor = min(max(factor, device.minAvailableVideoZoomFactor), device.maxAvailableVideoZoomFactor)
    } catch {
        print(error.localizedDescription)
    }
}

Next, we’ll call this method from the ViewModel so the view can control zooming.

func zoom(with factor: CGFloat) {
    cameraManager.setZoomScale(factor: factor)
}

Lastly, let’s update the ContentView to apply zoom adjustments based on pinch gestures.

// The zoom factor currently applied, and the factor when the last pinch ended
@State private var currentZoomFactor: CGFloat = 1.0
@State private var lastZoomFactor: CGFloat = 1.0

CameraPreview(session: viewModel.session) { tapPoint in
    isFocused = true
    focusLocation = tapPoint
    viewModel.setFocus(point: tapPoint)
    UIImpactFeedbackGenerator(style: .medium).impactOccurred()
}
.gesture(MagnificationGesture() // Apply a MagnificationGesture to the CameraPreview
    .onChanged { value in
        // `value` is the total scale since this pinch began, so scale from the last committed
        // factor and keep the result within a specific range (the manager also clamps to device limits)
        self.currentZoomFactor = min(max(self.lastZoomFactor * value, 1.0), 10)

        // Call a method to update the zoom level
        self.viewModel.zoom(with: currentZoomFactor)
    }
    .onEnded { _ in
        // Remember where this pinch left off so the next one continues from there
        self.lastZoomFactor = self.currentZoomFactor
    })

With the app running, pinch outward to zoom in and pinch inward to zoom out. This lets users frame their shots more precisely, enhancing the overall photography experience.
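MagnificationGesture reports the total scale since the current pinch began, not the change since the previous callback. A robust approach therefore multiplies that value by the zoom factor saved when the previous pinch ended, rather than adding it to a running total. The bookkeeping can be isolated in a small value type; PinchZoomTracker below is a hypothetical name, sketched in plain Swift, and its range is whatever you pass in (CameraManager still clamps to the device's own limits).

```swift
// Hypothetical helper that turns cumulative pinch scales into zoom factors.
struct PinchZoomTracker {
    let minFactor: Double
    let maxFactor: Double
    private(set) var currentFactor: Double = 1.0  // factor applied right now
    private var committedFactor: Double = 1.0     // factor when the last pinch ended

    init(minFactor: Double, maxFactor: Double) {
        self.minFactor = minFactor
        self.maxFactor = maxFactor
    }

    // Call from onChanged with the gesture's cumulative scale; returns the factor to apply.
    mutating func pinchChanged(scale: Double) -> Double {
        currentFactor = min(max(committedFactor * scale, minFactor), maxFactor)
        return currentFactor
    }

    // Call from onEnded so the next pinch continues from where this one stopped.
    mutating func pinchEnded() {
        committedFactor = currentFactor
    }
}
```

In ContentView you could keep one tracker in @State, call pinchChanged(scale:) from onChanged and pass the result to viewModel.zoom(with:), then call pinchEnded() from onEnded.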

Switch Front and Back Camera

Switching between the front and back cameras is a handy feature for enhancing your custom camera app.

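First, we update the CameraManager class. setupVideoInput() reads a position property to choose the camera, so make sure the class declares it (only once), and add a new function called switchCamera to handle camera switching:

var position: AVCaptureDevice.Position = .back // stores the current position (declare it only once)

func switchCamera() {
    sessionQueue.async { [weak self] in
        // Check if a current video input exists
        guard let self, let currentInput = self.videoDeviceInput else { return }

        // Batch the change so the session reconfigures only once
        self.session.beginConfiguration()

        // Remove the current video input, then set up the input for the new position
        self.session.removeInput(currentInput)
        self.setupVideoInput()

        // If no new input was added, restore the previous camera
        if self.videoDeviceInput === currentInput, self.session.canAddInput(currentInput) {
            self.session.addInput(currentInput)
            self.position = currentInput.device.position
            self.status = .configured
        }

        self.session.commitConfiguration()
    }
}

In the ViewModel, add a switchCamera function to facilitate camera switching: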

func switchCamera() {
    cameraManager.position = cameraManager.position == .back ? .front : .back
    cameraManager.switchCamera()
}

Now, let’s update the CameraSwitchButton view’s button action:

CameraSwitchButton { viewModel.switchCamera() }

With this, users can effortlessly switch between front and back cameras according to their preferences.

Test the app to ensure the feature works as expected.
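Switching cameras removes the current input before the new one is added, so if the new input can't be created the session could be left with no camera at all. A common safeguard is swap-with-rollback: remove the old input, try to add the new one, and put the old one back if that fails. The sketch below shows the pattern in plain Swift against a hypothetical InputSession protocol (a stand-in for the canAddInput/addInput/removeInput calls on AVCaptureSession), with a fake session so it runs without a device. With AVFoundation you would also wrap the swap in beginConfiguration()/commitConfiguration().

```swift
// Hypothetical stand-in for the parts of AVCaptureSession used when switching inputs.
protocol InputSession: AnyObject {
    associatedtype Input
    func canAddInput(_ input: Input) -> Bool
    func addInput(_ input: Input)
    func removeInput(_ input: Input)
}

// Replaces `current` with `replacement`; restores `current` if the replacement can't be added.
// Returns the input that is active afterwards.
func swapInput<S: InputSession>(in session: S, current: S.Input, replacement: S.Input) -> S.Input {
    session.removeInput(current)
    if session.canAddInput(replacement) {
        session.addInput(replacement)
        return replacement
    }
    // Rollback: the old input was just removed, so it can be added again.
    session.addInput(current)
    return current
}

// A fake session for trying the pattern out: it rejects inputs listed in `rejected`.
final class FakeSession: InputSession {
    private(set) var inputs: [String] = []
    var rejected: Set<String> = []

    func canAddInput(_ input: String) -> Bool { !rejected.contains(input) && !inputs.contains(input) }
    func addInput(_ input: String) { inputs.append(input) }
    func removeInput(_ input: String) { inputs.removeAll { $0 == input } }
}
```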

Capture and Save Image

In this section, we will walk through the implementation of the core functionality of the camera app.

Let’s begin with enhancing the CameraManager class to capture and manage images effectively:

Create a CameraDelegate Class

This class will conform to AVCapturePhotoCaptureDelegate and execute the necessary steps to process and save the image:

import AVFoundation
import Photos
import UIKit

class CameraDelegate: NSObject, AVCapturePhotoCaptureDelegate {
    private let completion: (UIImage?) -> Void

    init(completion: @escaping (UIImage?) -> Void) {
        self.completion = completion
    }

    // Called when the photo output has finished processing a captured photo
    func photoOutput(_ output: AVCapturePhotoOutput, didFinishProcessingPhoto photo: AVCapturePhoto, error: Error?) {
        if let error {
            print("CameraManager: Error while capturing photo: \(error)")
            completion(nil)
            return
        }

        if let imageData = photo.fileDataRepresentation(), let capturedImage = UIImage(data: imageData) {
            saveImageToGallery(capturedImage)
            completion(capturedImage)
        } else {
            print("CameraManager: Image not fetched.")
            completion(nil)
        }
    }

    // Saves the image to the user's photo library (requires photo library permission)
    func saveImageToGallery(_ image: UIImage) {
        PHPhotoLibrary.shared().performChanges {
            PHAssetChangeRequest.creationRequestForAsset(from: image)
        } completionHandler: { success, error in
            if success {
                print("Image saved to gallery.")
            } else if let error {
                print("Error saving image to gallery: \(error)")
            }
        }
    }
}

This class manages the image capture process and saves the images to the gallery.

Update the CameraManager Class

@Published var capturedImage: UIImage? = nil

private var cameraDelegate: CameraDelegate?

func captureImage() {
    sessionQueue.async { [weak self] in
        guard let self else { return }

        // Configure photo capture settings
        var photoSettings = AVCapturePhotoSettings()

        // Capture HEIC photos when supported
        if self.photoOutput.availablePhotoCodecTypes.contains(.hevc) {
            photoSettings = AVCapturePhotoSettings(format: [AVVideoCodecKey: AVVideoCodecType.hevc])
        }

        // Set the flash mode only if the current camera has a flash and the output supports the mode
        if let device = self.videoDeviceInput?.device, device.isFlashAvailable,
           self.photoOutput.supportedFlashModes.contains(self.flashMode) {
            photoSettings.flashMode = self.flashMode
        }

        photoSettings.isHighResolutionPhotoEnabled = true

        // Specify the preview format and photo quality
        if let previewPhotoPixelFormatType = photoSettings.availablePreviewPhotoPixelFormatTypes.first {
            photoSettings.previewPhotoFormat = [kCVPixelBufferPixelFormatTypeKey as String: previewPhotoPixelFormatType]
        }
        photoSettings.photoQualityPrioritization = .quality

        // Keep captured photos in portrait orientation
        if let videoConnection = self.photoOutput.connection(with: .video), videoConnection.isVideoOrientationSupported {
            videoConnection.videoOrientation = .portrait
        }

        // Keep a strong reference to the delegate until the capture finishes
        self.cameraDelegate = CameraDelegate { [weak self] image in
            // The delegate callback isn't guaranteed to arrive on the main queue, so publish there
            DispatchQueue.main.async {
                self?.capturedImage = image
            }
        }

        if let cameraDelegate = self.cameraDelegate {
            // Capture the photo with the delegate
            self.photoOutput.capturePhoto(with: photoSettings, delegate: cameraDelegate)
        }
    }
}

This function configures the capture settings and then asks the AVCapturePhotoOutput to capture a high-quality photo.
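Apple's documentation requires the flashMode in AVCapturePhotoSettings to be one of the photo output's supportedFlashModes, and capturePhoto(with:delegate:) raises an exception otherwise, for example when the current camera has no flash. A small helper that picks a safe mode makes that rule explicit. The sketch uses a hypothetical FlashSetting enum that mirrors the .off, .on, and .auto cases of AVCaptureDevice.FlashMode so it runs without AVFoundation; the fallback to .off is a design choice, not something the API mandates.

```swift
// Hypothetical mirror of AVCaptureDevice.FlashMode so the logic runs without AVFoundation.
enum FlashSetting {
    case off, on, auto
}

// Returns the requested mode if the output supports it, otherwise .off if that is supported.
// Returns nil when neither is available, meaning: leave the settings' default untouched.
func resolvedFlashMode(requested: FlashSetting, supported: [FlashSetting]) -> FlashSetting? {
    if supported.contains(requested) {
        return requested
    }
    return supported.contains(.off) ? .off : nil
}
```

With the real types, the equivalent check is photoOutput.supportedFlashModes.contains(flashMode) before assigning photoSettings.flashMode.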

ViewModel Updates

In the ViewModel, we’ll add a function to trigger image capture and handle permissions:

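import Photos // add these imports at the top of CameraViewModel.swift
import UIKit

@Published var capturedImage: UIImage?

// Add a new subscription for the values published by the manager
func setupBindings() {
    // ... existing shouldShowAlertView binding ...

    cameraManager.$capturedImage
        .receive(on: DispatchQueue.main)
        .sink { [weak self] image in
            self?.capturedImage = image
        }
        .store(in: &cancelables)
}

// Call when the capture button is tapped
func captureImage() {
    switch checkGalleryPermissionStatus() {
    case .authorized, .limited:
        cameraManager.captureImage()
    case .notDetermined:
        // Ask first; the photo is captured once access is granted
        requestGalleryPermission()
    default:
        // Denied or restricted: point the user to Settings
        showSettingAlert = true
    }
}

// Ask for permission to access the photo library, then capture if access was granted
func requestGalleryPermission() {
    PHPhotoLibrary.requestAuthorization { [weak self] status in
        // The handler isn't called on the main queue, so update published state there
        DispatchQueue.main.async {
            switch status {
            case .authorized, .limited:
                self?.cameraManager.captureImage()
            case .denied:
                self?.showSettingAlert = true
            default:
                break
            }
        }
    }
}

func checkGalleryPermissionStatus() -> PHAuthorizationStatus {
    return PHPhotoLibrary.authorizationStatus()
}

The captureImage function checks photo library permission before capturing; once a photo is captured, the capturedImage property updates so the UI can display it.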

We’ve requested permission to access the user’s photo library using PHPhotoLibrary from the Photos framework. Typically, this permission should be requested when the user interacts with the feature for the first time.
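Requesting photo library access at the moment of the first capture means the capture has to wait for the user's answer, because PHPhotoLibrary.requestAuthorization reports the result asynchronously. The sketch below models that flow in plain Swift: LibraryAccess is a hypothetical mirror of PHAuthorizationStatus, and the requestAccess closure stands in for the real request so the flow can be exercised with a synchronous answer.

```swift
// Hypothetical mirror of PHAuthorizationStatus so the flow runs without the Photos framework.
enum LibraryAccess {
    case notDetermined, restricted, denied, authorized, limited
}

// Captures right away when access exists; otherwise asks once and captures only if granted.
// requestAccess stands in for PHPhotoLibrary.requestAuthorization and may call back later.
func captureRespectingLibraryAccess(
    status: LibraryAccess,
    requestAccess: (@escaping (LibraryAccess) -> Void) -> Void,
    capture: @escaping () -> Void,
    showSettings: @escaping () -> Void
) {
    switch status {
    case .authorized, .limited:
        capture()
    case .notDetermined:
        requestAccess { newStatus in
            switch newStatus {
            case .authorized, .limited:
                capture()
            case .denied, .restricted:
                showSettings()
            case .notDetermined:
                break // no decision was recorded, so do nothing
            }
        }
    case .denied, .restricted:
        showSettings()
    }
}
```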

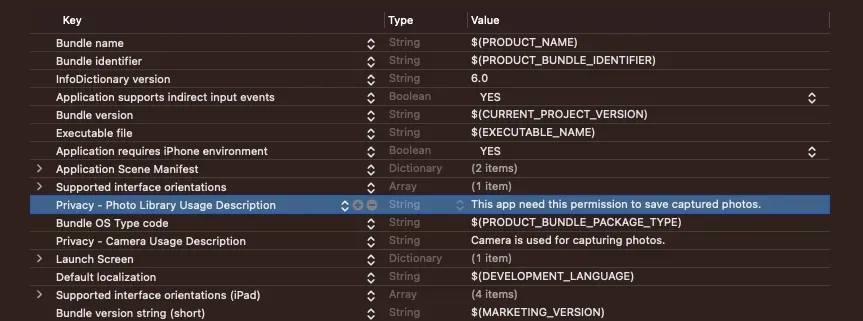

Requesting Gallery Permissions

Before capturing or saving images to the gallery, we need to request photo library permission. This ensures that our camera app complies with privacy and security best practices.

Make sure to add a photo library usage description to the project’s Info.plist file under the NSPhotoLibraryUsageDescription key (shown in Xcode as “Privacy - Photo Library Usage Description”).

View Changes

To represent the captured image and offer a button for image capture, make the following updates:

// Add an action to the CaptureButton inside the HStack
CaptureButton { viewModel.captureImage() }

// Pass the captured image to the thumbnail inside the same HStack
PhotoThumbnail(image: $viewModel.capturedImage)

// Update the photo thumbnail view to receive the image through a binding
struct PhotoThumbnail: View {
    @Binding var image: UIImage?

    var body: some View {
        Group {
            if let image {
                Image(uiImage: image)
                    .resizable()
                    .aspectRatio(contentMode: .fill)
                    .frame(width: 60, height: 60)
                    .clipShape(RoundedRectangle(cornerRadius: 10, style: .continuous))
            } else {
                Rectangle()
                    .frame(width: 50, height: 50, alignment: .center)
                    .foregroundColor(.black)
            }
        }
    }
}

Let’s test the app to make sure image capture and saving to the gallery work smoothly.

With that, we are done with our basic custom camera configuration.

Conclusion

Building a camera app might seem challenging initially. To make it easier, I’ve prepared a detailed, beginner-friendly guide to crafting a basic SwiftUI camera app.

Our journey doesn’t end here. In our upcoming blog series, we’ll delve into advanced camera features: adjusting exposure, fine-tuning color temperature, switching lenses, changing resolutions, and much more.

Stay tuned for more updates and enhancements!
